SA-EgoGS: Sharpness-Aware Dynamic Gaussian Splatting for High-Fidelity Egocentric Interaction

Sharpness-aware dynamic 3D Gaussian Splatting for robust egocentric scene reconstruction with anti-aliasing and uncertainty-aware densification.

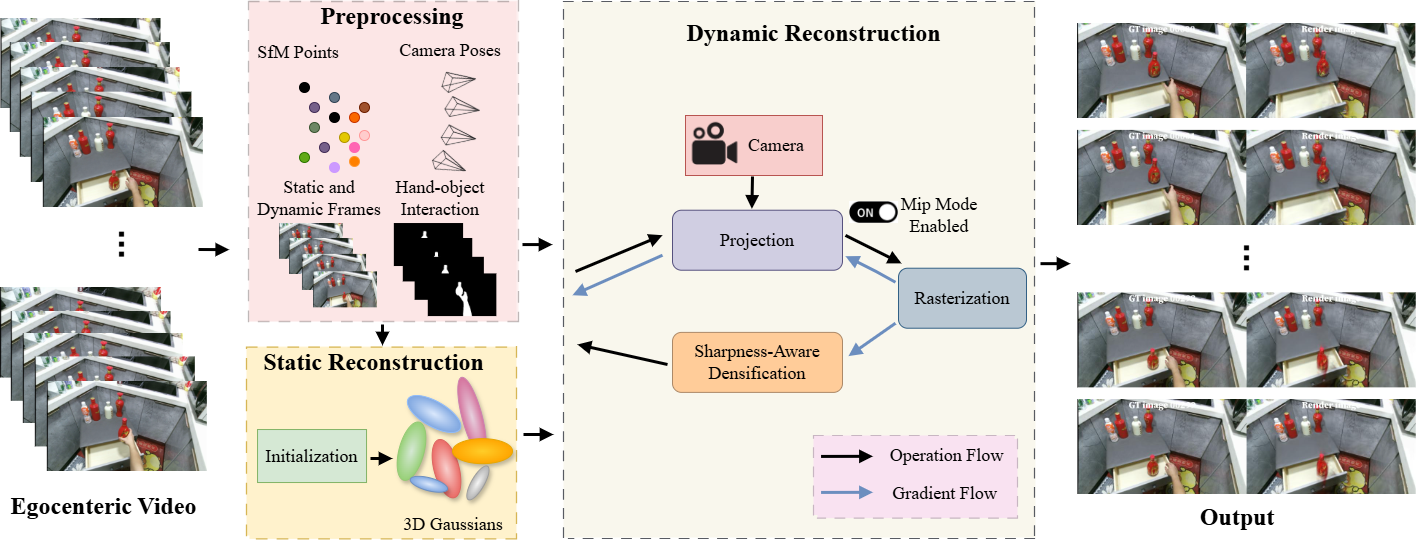

Overview

SA-EgoGS presents three complementary mechanisms that improve the robustness of Gaussian-based dynamic reconstruction under egocentric capture conditions. Sharpness-aware densification (SHAD) prioritizes high-frequency supervision in the presence of motion blur, scale-aware opacity capping (SAOC) stabilizes multi-scale alpha compositing, and Mip-style prefiltering (MSP) reduces aliasing during dynamic rendering. Experiments on the HOI4D and EPIC-KITCHENS benchmarks show consistent improvements in spatial accuracy, perceptual quality, and temporal coherence over state-of-the-art methods.

Architecture

Novel Contributions

3D Computer Vision

Sharpness-Aware Densification (SHAD)

Prioritizes high-frequency supervision signals during Gaussian densification, counteracting the blur bias inherent in motion-heavy egocentric video.
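
The page does not spell out the SHAD selection rule, so the following is only a minimal sketch of one way sharpness could steer densification. It assumes a Laplacian-magnitude sharpness map and a thresholded, sharpness-weighted positional gradient; the function names, the weighting, and the threshold are all hypothetical and are not taken from the SA-EgoGS code.

```python
def laplacian_sharpness(image):
    """Per-pixel |4-neighbour Laplacian| of a 2D grayscale image (list of rows).

    Border pixels are left at 0.0 because they lack a full neighbourhood.
    """
    h, w = len(image), len(image[0])
    sharp = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (image[y - 1][x] + image[y + 1][x]
                   + image[y][x - 1] + image[y][x + 1]
                   - 4.0 * image[y][x])
            sharp[y][x] = abs(lap)
    return sharp


def select_for_densification(grad_norms, centers_px, sharpness, threshold, eps=1e-8):
    """Return indices of Gaussians whose sharpness-weighted gradient exceeds threshold.

    grad_norms: view-space positional gradient magnitude per Gaussian (assumed input)
    centers_px: integer (x, y) pixel of each projected Gaussian centre
    sharpness:  map produced by laplacian_sharpness for the training frame
    """
    peak = max(max(row) for row in sharpness) + eps
    selected = []
    for i, (g, (x, y)) in enumerate(zip(grad_norms, centers_px)):
        weight = sharpness[y][x] / peak  # in [0, 1]; blurred regions get a low weight
        if g * weight > threshold:
            selected.append(i)
    return selected
```

Under these assumptions, a Gaussian that projects onto a blurred region needs a much larger gradient to be split or cloned than one that projects onto a crisp edge, which is the bias the description above aims for.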

Scale-Aware Opacity Capping (SAOC)

Stabilizes alpha compositing behavior across varying scales by dynamically capping Gaussian opacity based on projected footprint size.
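
As an illustration only, the sketch below shows one possible footprint-dependent cap. The actual SAOC rule is not given on this page; the assumption here is that Gaussians whose projected radius falls below a reference radius have their opacity cap reduced in proportion to the covered area. The names `projected_radius_px` and `cap_opacity`, and the defaults `ref_radius_px` and `max_opacity`, are hypothetical.

```python
def projected_radius_px(scale_world, depth, focal_px):
    """Approximate screen-space radius of a Gaussian under a pinhole camera."""
    return focal_px * scale_world / depth


def cap_opacity(opacity, radius_px, ref_radius_px=1.0, max_opacity=0.99):
    """Clamp opacity to a cap that shrinks with the projected footprint.

    Assumed rule: the cap scales with footprint area relative to a reference
    radius, so sub-pixel Gaussians cannot contribute near-full opacity.
    """
    coverage = min(1.0, (radius_px / ref_radius_px) ** 2)
    cap = max_opacity * coverage
    return min(opacity, cap)


# Usage: a nearby Gaussian keeps almost all of its opacity, a distant one is capped.
near = cap_opacity(0.9, projected_radius_px(0.01, depth=2.0, focal_px=500.0))
far = cap_opacity(0.9, projected_radius_px(0.01, depth=10.0, focal_px=500.0))
```

With this choice the same primitive contributes less as it recedes from the camera, which keeps the compositing weights from jumping when its footprint crosses the pixel scale.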

Mip-Style Prefiltering (MSP)

Applies multi-scale prefiltering to Gaussian primitives during rendering, reducing aliasing artifacts in dynamic egocentric scenes.
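
For intuition, the sketch below follows the 2D Mip filter popularized by Mip-Splatting: a small isotropic variance is added to the projected 2D covariance, and opacity is rescaled so the splat's total contribution is preserved. Whether SA-EgoGS uses exactly this form is an assumption; the function name and the `filter_var` default are illustrative.

```python
import math


def mip_prefilter_2d(cov, opacity, filter_var=0.1):
    """Apply a Mip-style low-pass filter to one projected 2D Gaussian.

    cov:        ((a, b), (c, d)) symmetric positive semi-definite 2x2 covariance, in pixels^2
    opacity:    Gaussian opacity before filtering
    filter_var: variance of the assumed pixel-sized low-pass kernel
    Returns the filtered covariance and the rescaled opacity.
    """
    (a, b), (c, d) = cov
    det_orig = a * d - b * c
    a_f, d_f = a + filter_var, d + filter_var
    det_filt = a_f * d_f - b * c
    # Widening the Gaussian would otherwise add energy; compensate via the
    # ratio of determinants so small splats fade instead of dilating.
    scale = math.sqrt(max(det_orig, 0.0) / det_filt)
    return ((a_f, b), (c, d_f)), opacity * scale
```

Large splats are almost unchanged by this filter, while splats near or below the pixel scale are widened and dimmed, which is what suppresses the high-frequency flicker typical of aliasing.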

Technology Stack

Related Publications

SA-EgoGS: Sharpness-Aware Dynamic Gaussian Splatting for High-Fidelity Egocentric Interaction

Goshu, H.L., Wakjira, T.G., Lam, K.M. & Fouda, M.M.

Under Review (2025)

Interested in This Research?

For code access, collaboration opportunities, or questions about this project, please contact the PI directly.